Java教程

一条爬虫抓取一个小网站所有数据

本文主要是介绍一条爬虫抓取一个小网站所有数据,对大家解决编程问题具有一定的参考价值,需要的程序猿们随着小编来一起学习吧!

一条爬虫抓取一个小网站所有数据

今天闲来无事,写一个爬虫来玩玩。在网上冲浪的时候发现了一个搞笑的段子网,发现里面的内容还是比较有意思的,于是心血来潮,就想着能不能写一个Python程序,抓取几条数据下来看看,一不小心就把这个网站的所有数据都拿到了。

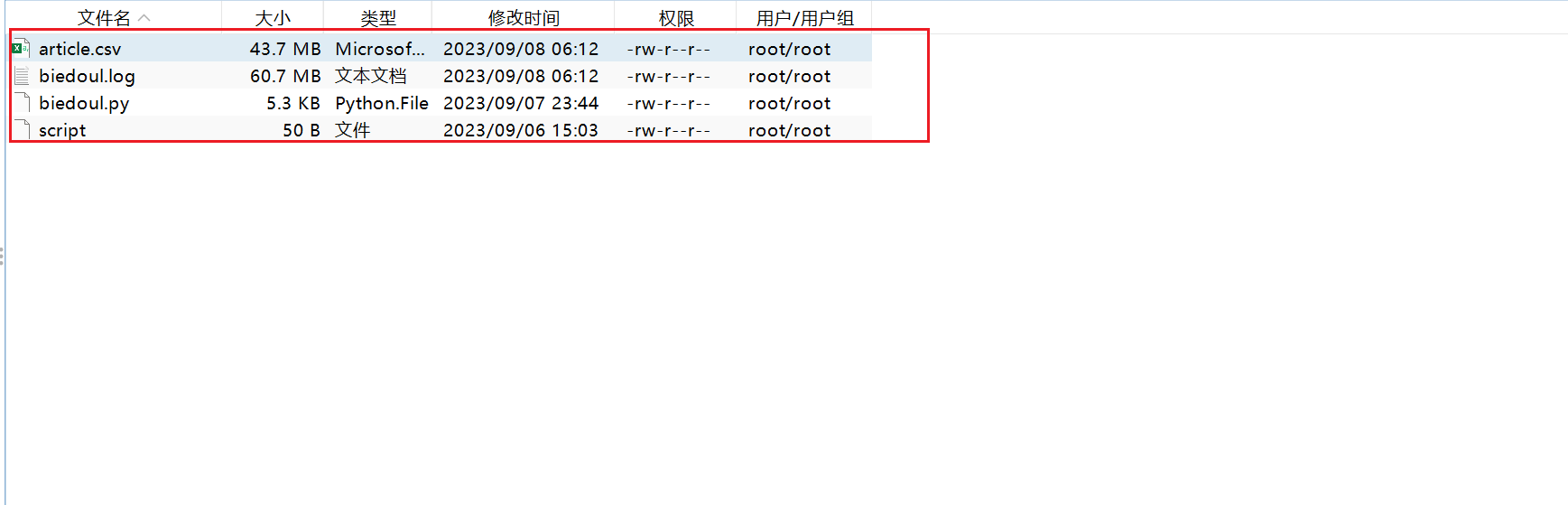

这个网站主要的数据都是详情在HTML里面的,可以采用lxml模块的xpath对HTML标签的内容解析,获取到自己想要的数据,然后再保存在本地文件中,整个过程是一气呵成的。能够抓取到一页的数据之后,加一个循环就可以抓取到所有页的数据,下面的就是数据展示。

废话少说,直接上Python代码

import requests

import csv

from lxml import etree

import time

class Page:

def __init__(self):

self.pre_url = "https://www.biedoul.com"

self.start_page = 1

self.end_page = 15233

def askHTML(self, current_page, opportunity):

print(

"=============================== current page => " + str(current_page) + "===============================")

try:

pre_url = self.pre_url + "/index/" + str(current_page)

page = requests.get(url=pre_url)

html = etree.HTML(page.content)

articles = html.xpath('/html/body/div/div/div/dl')

return articles

except Exception as e:

if opportunity > 0:

time.sleep(500)

print(

"=============================== retry => " + str(opportunity) + "===============================")

return self.askHTML(current_page, opportunity - 1)

else:

return None

def analyze(self, articles):

lines = []

for article in articles:

data = {}

data["link"] = article.xpath("./span/dd/a/@href")[0]

data["title"] = article.xpath("./span/dd/a/strong/text()")[0]

data["content"] = self.analyze_content(article)

picture_links = article.xpath("./dd/img/@src")

if (picture_links is not None and len(picture_links) > 0):

# print(picture_links)

data["picture_links"] = picture_links

else:

data["picture_links"] = []

# data["good_zan"] = article.xpath("./div/div/a[@class='pinattn good']/p/text()")[0]

# data["bad_bs"] = article.xpath("./div/div/a[@class='pinattn bad']/p/text()")[0]

data["good_zan"] = self.analyze_zan(article, "good")

# article.xpath("./div/div/a[@class='pinattn good']/p/text()")[0]

data["bad_bs"] = self.analyze_zan(article, "bad")

# article.xpath("./div/div/a[@class='pinattn bad']/p/text()")[0]

lines.append(data)

return lines

# 解析文章内容

def analyze_content(self, article):

# 1. 判断dd标签下是否为文本内容

content = article.xpath("./dd/text()")

if content is not None and len(content) > 0 and not self.is_empty_list(content):

return content

content = []

p_list = article.xpath("./dd")

for p in p_list:

# 2. 判断dd/.../font标签下是否为文本内容

if len(content) <= 0 or content is None:

fonts = p.xpath(".//font")

for font_html in fonts:

font_content = font_html.xpath("./text()")

if font_content is not None and len(font_content) > 0:

content.append(font_content)

# 3. 判断dd/.../p标签下是否为文本内容

if len(content) <= 0 or content is None:

fonts = p.xpath(".//p")

for font_html in fonts:

font_content = font_html.xpath("./text()")

if font_content is not None and len(font_content) > 0:

content.append(font_content)

return content

def analyze_zan(self, article, type):

num = article.xpath("./div/div/a[@class='pinattn " + type + "']/p/text()")

if num is not None and len(num) > 0:

return num[0]

return 0

def do_word(self):

fieldnames = ['index', 'link', 'title', 'content', 'picture_links', 'good_zan', 'bad_bs']

with open('article.csv', 'a', encoding='UTF8', newline='') as f:

writer = csv.DictWriter(f, fieldnames=fieldnames)

# writer.writeheader()

for i in range(self.start_page, self.end_page):

articles = self.askHTML(i, 3)

if articles is None:

continue

article_list = self.analyze(articles)

self.save(writer, article_list)

# 保存到文件中

def save(self, writer, lines):

print("##### 保存中到文件中...")

# python2可以用file替代open

print(lines)

writer.writerows(lines)

print("##### 保存成功...")

def is_empty_list(self, list):

for l in list:

if not self.empty(l):

return False

return True

def empty(self, content):

result = content.replace("\r", "").replace("\n", "")

if result == "":

return True

return False

# 递归解析文章内容

def analyze_font_content(self, font_html, depth):

content = []

print(depth)

font_content_list = font_html.xpath("./font/text()")

if font_content_list is not None and len(font_content_list) > 0 and not self.is_empty_list(font_content_list):

for font_content in font_content_list:

content.append(font_content)

else:

if depth < 0:

return []

return self.analyze_font_content(font_html.xpath("./font"), depth - 1)

return content

if __name__ == '__main__':

page = Page()

page.do_word()

在运行下面的代码之前,需要先按照好requests、lxml两个模块,安装命令为:

pip installl requests pip install lxml

这篇关于一条爬虫抓取一个小网站所有数据的文章就介绍到这儿,希望我们推荐的文章对大家有所帮助,也希望大家多多支持为之网!

您可能喜欢

-

这代码注释给我看破防了……07-29

-

提供个性化内容的15个最佳IP地理定位API07-29

-

新课终于做完了...07-29

-

测试工程师在敏捷项目中扮演什么角色?07-27

-

逆天的数学 | 数学科普07-27

-

纪录片|数学漫步之旅07-27

-

高中概率学习中如何刻画复杂的事件07-27

-

向量的投影与投影向量07-27

-

二项式定理相关问题07-27

-

Adobe国际认证(证书)认证价值详解07-26

-

01.计算机组成原理和结构07-25

-

城域网07-25

-

为什么API经济在经济不确定时期表现突出07-25

-

Python实现Java mybatis-plus 产生的SQL自动化测试SQL速度和判断SQL是否走索引07-24

-

轻松获取天气信息:免费天气API一览07-24

栏目导航