
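Let's Talk About: Canary Releases with nginx-ingress for Services Deployed on Different K8S Clusters

Preface

In an earlier article, "Let's talk about building a simple canary router with Spring Cloud Gateway", the protagonist came back with another requirement. One of their services does not go through the gateway. It is exposed directly through nginx-ingress, and they now want canary releases for this service too. He knows how to do canary releases with nginx-ingress inside a single cluster, but this service is deployed on a new cluster. He searched through plenty of material without finding the answer he wanted, so he asked whether I had any ideas. That is today's topic: how services on different K8S clusters can use nginx-ingress for canary releases.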

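Prerequisites

What canary capabilities does nginx-ingress provide?

nginx-ingress implements canary releases through annotations. Setting nginx.ingress.kubernetes.io/canary to "true" enables canary routing for that Ingress; setting it to "false" disables it.

The canary rules nginx-ingress supports out of the box are: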

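- nginx.ingress.kubernetes.io/canary-by-header: header-based traffic splitting, suitable for canary releases. If the request contains the specified header and its value is "always", the request is forwarded to the backend service defined in the Canary Ingress. If the value is "never", it is not forwarded there, which can be used to roll back to the old version. For any other value, this annotation is ignored and the request is matched against the remaining rules in priority order.

- nginx.ingress.kubernetes.io/canary-by-header-value: must be used together with canary-by-header and lets you define a custom header value instead of only "always" or "never". When the header value matches the specified value, the request is forwarded to the backend service defined in the Canary Ingress. For any other value, this annotation is ignored and the request is matched against the remaining rules in priority order.

- nginx.ingress.kubernetes.io/canary-by-header-pattern: works like canary-by-header-value, except that the header value is matched against a regular expression instead of a fixed string. If canary-by-header-value is also set, this annotation is ignored.

- nginx.ingress.kubernetes.io/canary-by-cookie: cookie-based traffic splitting, suitable for canary releases. It works like canary-by-header but inspects a cookie, and it only supports the values "always" and "never"; custom values are not allowed.

- nginx.ingress.kubernetes.io/canary-weight: weight-based traffic splitting, suitable for blue-green deployments. The value is the percentage of traffic sent to the Canary Ingress, from 0 to 100 by default. For example, 100 sends all traffic to the backend service of the Canary Ingress.

- nginx.ingress.kubernetes.io/canary-weight-total: sets the total that canary-weight is measured against. If it is not set, the total defaults to 100.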
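As an illustration of canary-by-header combined with canary-by-header-value, the sketch below sends only requests that carry a custom header to the new service. The header name X-Canary and the value gray are hypothetical choices, and svc-new is the service from the same-cluster example. Because ingress-nginx applies at most one canary Ingress per host and path, this manifest would replace, not sit alongside, a weight-based canary Ingress for the same rule.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: svc-new
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    # Hypothetical header name and value: only "X-Canary: gray" goes to svc-new
    nginx.ingress.kubernetes.io/canary-by-header: "X-Canary"
    nginx.ingress.kubernetes.io/canary-by-header-value: "gray"
spec:
  rules:
  - host: lybgeek.svc.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: svc-new
            port:
              number: 80
```

With this in place, `curl -H "X-Canary: gray" http://lybgeek.svc.com` should reach svc-new, while requests without the header, or with any other value, fall through to the remaining rules and, with no other rule set, to the main svc-old Ingress.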

Note: the rules take precedence in this order, from highest to lowest: canary-by-header -> canary-by-cookie -> canary-weight.
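To make the priority order concrete, here is a hypothetical canary Ingress that sets all three rules at once; the header name X-Canary, the cookie name canary_user and the 20% weight are illustrative assumptions. A request with `X-Canary: always` goes to svc-new and one with `X-Canary: never` stays on the main Ingress, whatever its cookie says. Only when the header is absent or has another value is the cookie checked, and only if neither decides does the 20% weight apply.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: svc-new
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    # 1st: header X-Canary with value always / never (hypothetical header name)
    nginx.ingress.kubernetes.io/canary-by-header: "X-Canary"
    # 2nd: cookie canary_user with value always / never (hypothetical cookie name)
    nginx.ingress.kubernetes.io/canary-by-cookie: "canary_user"
    # Last: 20% of the requests that neither header nor cookie decided
    nginx.ingress.kubernetes.io/canary-weight: "20"
spec:
  rules:
  - host: lybgeek.svc.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: svc-new
            port:
              number: 80
```

For example, `curl --cookie "canary_user=always" http://lybgeek.svc.com` should always hit svc-new, unless the same request also sends `X-Canary: never`.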

For more canary configuration options, see the official documentation:

https://kubernetes.github.io/ingress-nginx/user-guide/nginx-configuration/annotations/#canary

同集群利用ingress进行灰度示例

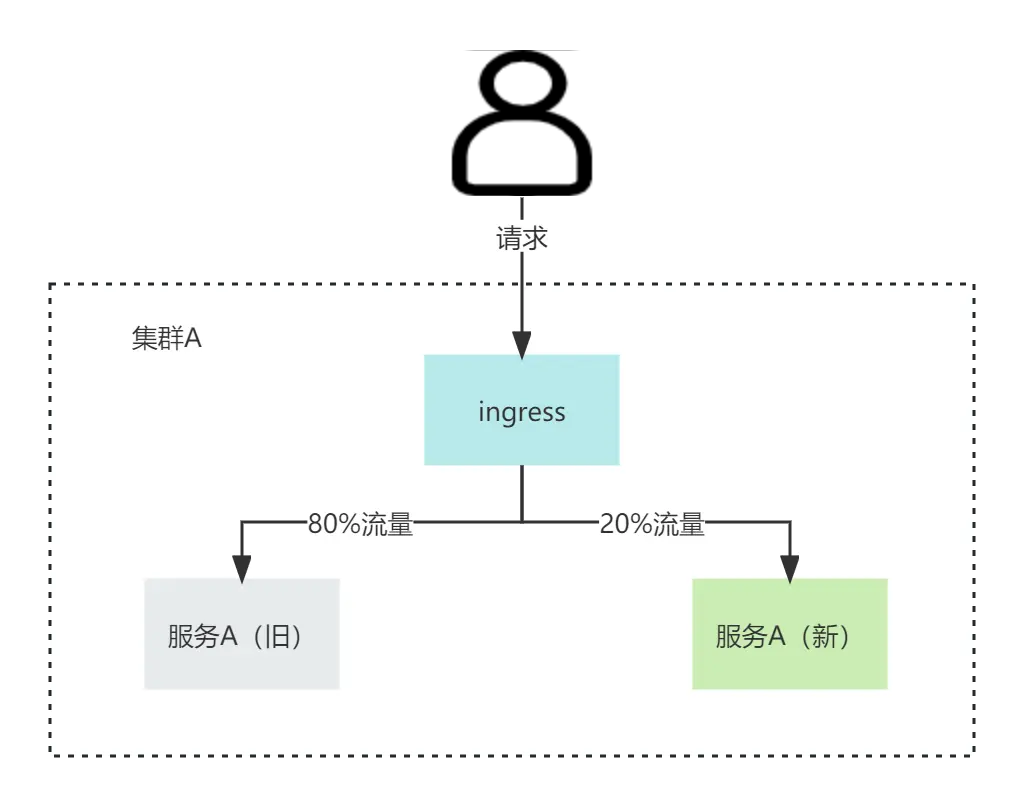

注: 以服务权重的流量切分为例,实现的效果如图

实现步骤如下

1、配置旧服务相关的deployment 、service、ingress

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: svc-old
  labels:
    app: svc-old
spec:
  replicas: 1
  selector:
    matchLabels:
      app: svc-old
  template:
    metadata:
      labels:
        app: svc-old
    spec:
      containers:
      - name: svc-old
        imagePullPolicy: Always
        image: lybgeek.harbor.com/lybgeek/svc-old:v1
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: svc-old
spec:
  type: ClusterIP
  selector:
    app: svc-old
  ports:
  - port: 80
    targetPort: 80
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: svc-old
spec:
  rules:
  - host: lybgeek.svc.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: svc-old
            port:
              number: 80
```

2. Configure the Deployment, Service, and Ingress for the new service

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: svc-new
  labels:
    app: svc-new
spec:
  replicas: 1
  selector:
    matchLabels:
      app: svc-new
  template:
    metadata:
      labels:
        app: svc-new
    spec:
      containers:
      - name: svc-new
        imagePullPolicy: Always
        image: lybgeek.harbor.com/lybgeek/svc-new:v1
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: svc-new
spec:
  type: ClusterIP
  selector:
    app: svc-new
  ports:
  - port: 80
    targetPort: 80
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "20"
  name: svc-new
spec:
  rules:
  - host: lybgeek.svc.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: svc-new
            port:
              number: 80
```

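The core configuration is:

```yaml
nginx.ingress.kubernetes.io/canary: "true"
nginx.ingress.kubernetes.io/canary-weight: "20"
```

This sends 20% of the traffic for lybgeek.svc.com to the new service; the remaining 80% stays on the old service behind the main Ingress.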
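Since the weight is a percentage by default, the smallest step is 1%. For a finer split, the canary-weight-total annotation from the rule list changes the denominator. A hedged sketch, assuming an ingress-nginx release recent enough to support canary-weight-total: a weight of 5 out of a total of 1000 sends roughly 0.5% of requests to svc-new. Only the metadata changes; the spec stays the same as in the svc-new Ingress above.

```yaml
metadata:
  name: svc-new
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    # 5 / 1000 = 0.5% of requests go to svc-new
    nginx.ingress.kubernetes.io/canary-weight: "5"
    nginx.ingress.kubernetes.io/canary-weight-total: "1000"
```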

3. Test

```
[root@master ~]# for i in {1..10}; do curl http://lybgeek.svc.com; done;
svc-old
svc-old
svc-old
svc-new
svc-old
svc-old
svc-old
svc-old
svc-new
svc-old
```

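Roughly 20% of the requests (2 out of 10 here) reach the new service. The weight is applied to each request independently, so the exact ratio in a small sample will vary.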

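Example: canary release with ingress across different clusters

The key idea is simply to add one more nginx server: the canary Ingress in the old cluster sends its share of traffic to this nginx, which then forwards the requests to the new service in the other cluster.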
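The article does not show how the new service is exposed on the new cluster. Judging from the nginx proxy configuration later in the article, which forwards to lybgeek.svcnew.com, one plausible setup is an ordinary, non-canary Ingress on the new cluster bound to that host. The sketch below assumes svc-new is deployed there with the same Deployment and Service as in the same-cluster example, and that lybgeek.svcnew.com resolves to the new cluster's ingress controller.

```yaml
# Applied on the NEW cluster (assumed setup, not shown in the original article)
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: svc-new
  annotations:
    kubernetes.io/ingress.class: nginx
spec:
  rules:
  # Host that the relay nginx in the old cluster forwards to
  - host: lybgeek.svcnew.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: svc-new
            port:
              number: 80
```

No canary annotations are needed here: on the new cluster this is the main Ingress, and the traffic split is decided entirely in the old cluster.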

The steps are as follows:

1. The old service is configured the same way as before (omitted).

2. Add the Deployment, Service, and Ingress for nginx

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: svc-nginx
spec:
  selector:
    matchLabels:
      app: svc-nginx
  template:
    metadata:
      labels:
        app: svc-nginx
    spec:
      containers:
      - image: lybgeek.harbor.com/lybgeek/nginx:1.23.2
        name: svc-nginx
        ports:
        - containerPort: 80
        volumeMounts:
        - mountPath: /etc/nginx/conf.d/api.conf
          name: vol-nginx
          subPath: api.conf
      restartPolicy: Always
      volumes:
      - configMap:
          name: nginx-svc
        name: vol-nginx
---
apiVersion: v1
kind: Service
metadata:
  name: svc-nginx
spec:
  type: ClusterIP
  selector:
    app: svc-nginx
  ports:
  - port: 80
    targetPort: 80
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "20"
  name: svc-nginx
spec:
  rules:
  - host: lybgeek.svc.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: svc-nginx
            port:
              number: 80
```

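The core nginx configuration (the server block mounted into the container as /etc/nginx/conf.d/api.conf) is as follows: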

```nginx
server {
    listen 80;

    gzip on;
    gzip_min_length 100;
    gzip_types text/plain text/css application/xml application/javascript;
    gzip_vary on;
    underscores_in_headers on;

    location / {
        # Forward to the domain of the new service in the other cluster
        proxy_pass http://lybgeek.svcnew.com/;
        # Rewrite Host so the new cluster's ingress matches its host rule
        proxy_set_header Host lybgeek.svcnew.com;
        proxy_set_header X-Real-IP $remote_addr;
        # Standard header name is X-Forwarded-For; append this hop's client address
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_pass_request_headers on;
        proxy_next_upstream off;
        proxy_connect_timeout 90;
        proxy_send_timeout 3600;
        proxy_read_timeout 3600;
        client_max_body_size 10m;
    }
}
```

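The key lines are:

```nginx
proxy_pass http://lybgeek.svcnew.com/;
proxy_set_header Host lybgeek.svcnew.com;
```

They route requests to the new service. Overriding the Host header matters, assuming the new service is exposed through an Ingress on the new cluster with host lybgeek.svcnew.com: that ingress selects a backend by host, so requests must arrive as lybgeek.svcnew.com rather than the original lybgeek.svc.com.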
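The svc-nginx Deployment mounts api.conf from a ConfigMap named nginx-svc, which the article does not list. A minimal sketch of that ConfigMap, assuming it lives in the same namespace as svc-nginx and holds the server block above under the key api.conf (the key must match the subPath in the volumeMount):

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-svc
data:
  # Key name must match "subPath: api.conf" in the svc-nginx Deployment
  api.conf: |
    server {
        listen 80;

        gzip on;
        gzip_min_length 100;
        gzip_types text/plain text/css application/xml application/javascript;
        gzip_vary on;
        underscores_in_headers on;

        location / {
            proxy_pass http://lybgeek.svcnew.com/;
            proxy_set_header Host lybgeek.svcnew.com;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            proxy_pass_request_headers on;
            proxy_next_upstream off;
            proxy_connect_timeout 90;
            proxy_send_timeout 3600;
            proxy_read_timeout 3600;
            client_max_body_size 10m;
        }
    }
```

Because the file is mounted with subPath, later edits to the ConfigMap are not propagated into running Pods; restarting the Deployment, for example with `kubectl rollout restart deployment svc-nginx`, picks them up.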

3. Test

```
[root@master ~]# for i in {1..10}; do curl http://lybgeek.svc.com; done;
svc-old
svc-old
svc-old
svc-old
svc-old
svc-new
svc-old
svc-old
svc-new
```

Again, roughly 20% of the requests reach the new service.
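Once the new service looks healthy, the rollout is driven by the canary-weight annotation on the svc-nginx Ingress: raise it step by step, up to 100 to send all traffic through the relay nginx to the new cluster, or set it to 0 to roll back. One declarative way to change it is a merge patch; the file name weight-50.yaml and the value 50 are illustrative.

```yaml
# weight-50.yaml: merge patch for the svc-nginx canary Ingress (hypothetical file name)
metadata:
  annotations:
    # 50% of requests now go through the relay nginx to the new cluster
    nginx.ingress.kubernetes.io/canary-weight: "50"
```

It could be applied with `kubectl patch ingress svc-nginx --type merge --patch-file weight-50.yaml` on kubectl versions that support `--patch-file`; otherwise the same content can be passed inline with `-p`.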

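Summary

This article mainly relies on the canary capabilities built into ingress. Canary releases across clusters are achieved by adding one extra layer. When designing a solution and you have no idea where to start, try reasoning about what adding a layer would give you. Of course, if your company already runs a service mesh, the mesh can provide powerful canary capabilities directly. For the other canary features of ingress, see the official documentation or the link below: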

https://help.aliyun.com/zh/ack/ack-managed-and-ack-dedicated/user-guide/use-the-nginx-ingress-controller-for-canary-releases-and-blue-green-deployments-1
